I couldn’t make it to APIC this year, but I have picked out a few highlights. More than 300 abstracts were presented so I can only scratch the surface here, but the good news is that they’re all available in an AJIC supplement.

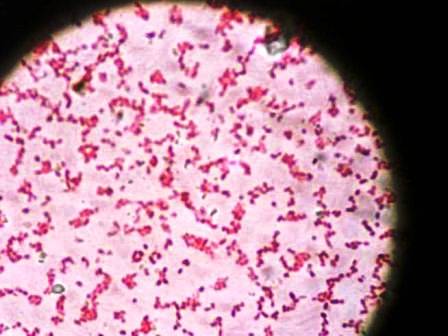

Multidrug-resistant Gram-negative rods

One of the oral presentations was on controlling CRE in Texas (Cifelli et al). The interventions comprised improvements in lab identification and patient electronic tagging, and front-line infection prevention and control practices (dedicated rooms, equipment and staff etc). It’s difficult to know which of these approaches (if any!) made the difference: we still don’t know what works to control CRE.

A group from Louisville explored transmission of CRE in an LTAC (Kelley et al). LTACs have previously been shown to be a hotbed for CRE transmission in some parts of the USA. They found that almost half of the patients who acquired CRE were admitted to beds that had previously been occupied by a CRE patient, which brings a new meaning to ‘hotbed’! This links in with previous studies showing that admission to a room previously occupied by a patient with MDROs is a risk factor for acquisition. It also shows that CRE (K. pneumoniae at least) can survive for long enough on surfaces to bring indirect transmission via environmental contamination into play.

Definitions and terminology surrounding CRE and MDR-GNR in general are in a state of confusion. Both require urgent clarification. A survey of 79 hospitals by Jadin et al for their definitions of MDR-GNR yielded virtually 79 different definitions! This makes it challenging for facilities to communicate clearly about MDR-GNR, since what qualifies as MDR-GNR in one hospital may not make the cut in another. And this is not even accounting for variations in lab diagnostics!

A small prevalence survey of CRE carriage in Michigan by Berriel-Cass et al found that 2 (3.8%) of 53 patients were colonized. Neither of these patients had a history of CRE, but one patient who did have a history of CRE screened negative! It’s difficult to know who is at high risk for CRE carriage, and even more difficult to know how long they will carry it for. However, we probably know enough to conclude that “once positive always positive” is a sensible (if somewhat conservative) approach.

The rest

A fascinating study from Arizona by Sifuentes et al evaluated a hygiene intervention in an LTCF. A number of bacteriophages were used as markers for pathogenic virus transmission and inoculated onto hands and surfaces. The viruses spread rapidly throughout the facility over a short time period (measured in hours), and a hygiene intervention significantly reduced the level of contamination of hands and surfaces. Most similar work has been performed in the acute setting, so some data from the non-acute setting is particularly welcome. This study illustrates the dynamic interplay between hand and surface contamination. In a way, hands are just another highly mobile fomite that is not disinfected frequently enough!

Jinadatha et al performed a very timely study exploring whether serial passage of bacteria with sub-lethal UV exposure prompts reduced susceptibility to UV. The study found that 25 serial exposures to UV did not affect bacterial UV susceptibility. However, the study did not explore whether other mutations useful to the bacteria may have occurred in the “survivors”; perhaps this is a job for whole genome sequencing in a follow-up study?

Faecal microbiota transplantation (FMT) is quickly becoming the standard of care for recurrent CDI. A study by Greig et al tells the story of implementing an FMT programme. The literature on FMT is impressive, but the ‘nuts and bolts’ of implementation are challenging. Where do you get the donor stool from? How do you screen the donors? Who performs the procedure? Who pays? Will it work here? These are just some of the questions that need to be negotiated to implement an FMT programme successfully. The message from this study is that it’s worth it: 83% of patients with recurrent CDI had resolved within 30 days.

Finally, I remain rather skeptical that “CA-CDI” is really on the rise. However, I may have to revise my opinion based on this abstract by Rogers and Rosacker, which showed that a community-based educational intervention reduced the rate of CA-CDI!